本文使用三台机器作为搭建过程

角色 ip 主机名

Master+Node1 10...82 zy-k8s-01

Node2 10...91 zy-k8s-02

Node3 10...229 zy-k8s-03

1、 装linux

2、注意需要保证内核带有NAT模块,没有的话需要升级内核(这是自己编译的内核,带有NAT模块)

升级成功!

3、 /etc/resolv.conf配置dns

nameserver127.0.0.1

nameserver 9...120

nameserver 9...34

4、 保证机器有外网访问权限

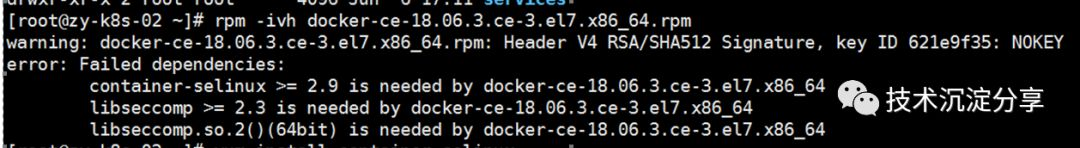

5、 安装docker-ce,提示没有container-selinux和libseccomp

yum installlibseccomp

yum installcontainer-selinux

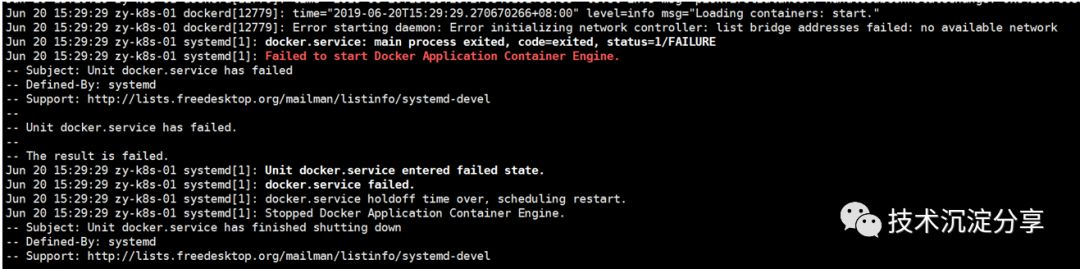

6、 systemctlstart docker启动docker

报错,由于dockerd绑定的是docker0网卡,因此需要手工创建docker0网卡

报错,由于dockerd绑定的是docker0网卡,因此需要手工创建docker0网卡

7、 创建docker0网卡

brctl addbrdocker0

ip addr add172.17.0.1/16 dev docker0

ip link set devdocker0 up

ip addr showdocker0

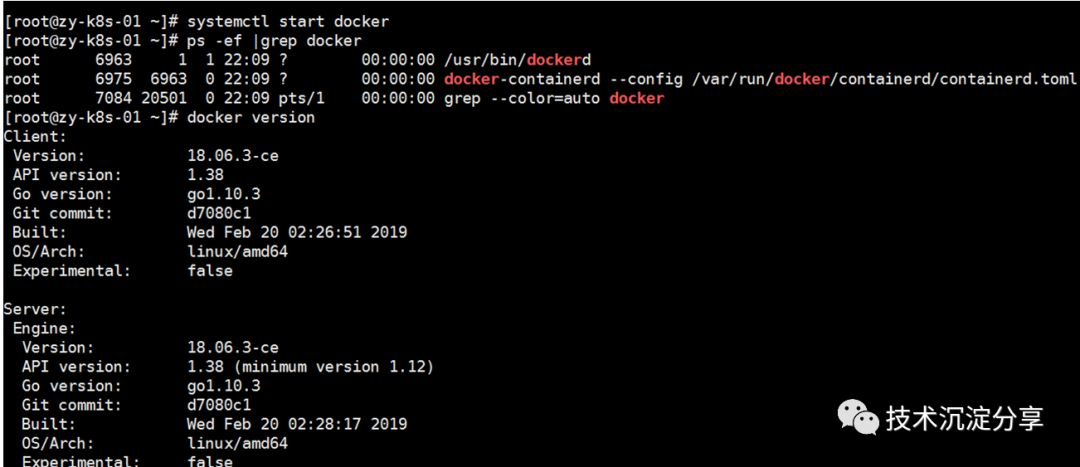

8、 再次启动docker

由于docker默认存储路径是根分区的/var/lib/docker/lib,存储空间太小。因此这里改成/data/docker/lib/docker目录下。

由于docker默认存储路径是根分区的/var/lib/docker/lib,存储空间太小。因此这里改成/data/docker/lib/docker目录下。

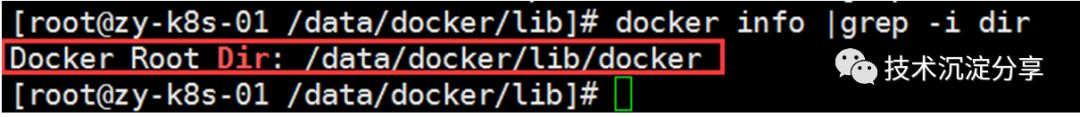

修改方式:

(1) stopdocker daemon

(2) 创建docker目录

cd /data/; mkdirdocker/lib -p

(3) rsync-avz /var/lib/docker /data/docker/lib

(4) mkdir-p /etc/systemd/system/docker.service.d

(5) vim /etc/systemd/system/docker.service.d/devicemapper.conf

内容如下:

[Service]

ExecStart=

ExecStart=/usr/bin/dockerd–graph=/data/docker/lib/docker

(6) systemctldaemon-reload; systemctl restart docker

(7) dockerinfo | grep -i dir

编译K8S组件,本文使用到的kubectl/kubelet/api-server等组件基于K8S1.12版本源码编译生成,编译指引参考网上指引

编译K8S组件,本文使用到的kubectl/kubelet/api-server等组件基于K8S1.12版本源码编译生成,编译指引参考网上指引

将生成的kube* 所有组件copy至所有node的/usr/local/bin目录下。

创建TLS证书和秘钥

https://jimmysong.io/kubernetes-handbook/practice/create-tls-and-secret-key.html

创建kubeconfig文件

https://jimmysong.io/kubernetes-handbook/practice/create-kubeconfig.html

创建高可用etcd集群

https://jimmysong.io/kubernetes-handbook/practice/etcd-cluster-installation.html

本文使用的etcd也是源码编译而来,主要是为了以后方便自定义和调试。

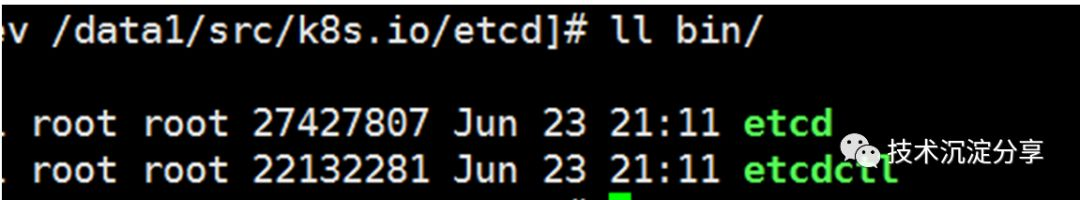

编译etcd

git clone https://github.com/bigbang009/etcd.git

cd /data1/src/k8s.io/etcd;./build

执行完编译后,在当前目录会有bin目录生成,包含了两个文件:etcd和etcdctl

将两个二进制文件copy至每个node的/usr/local/bin目录下。

将两个二进制文件copy至每个node的/usr/local/bin目录下。

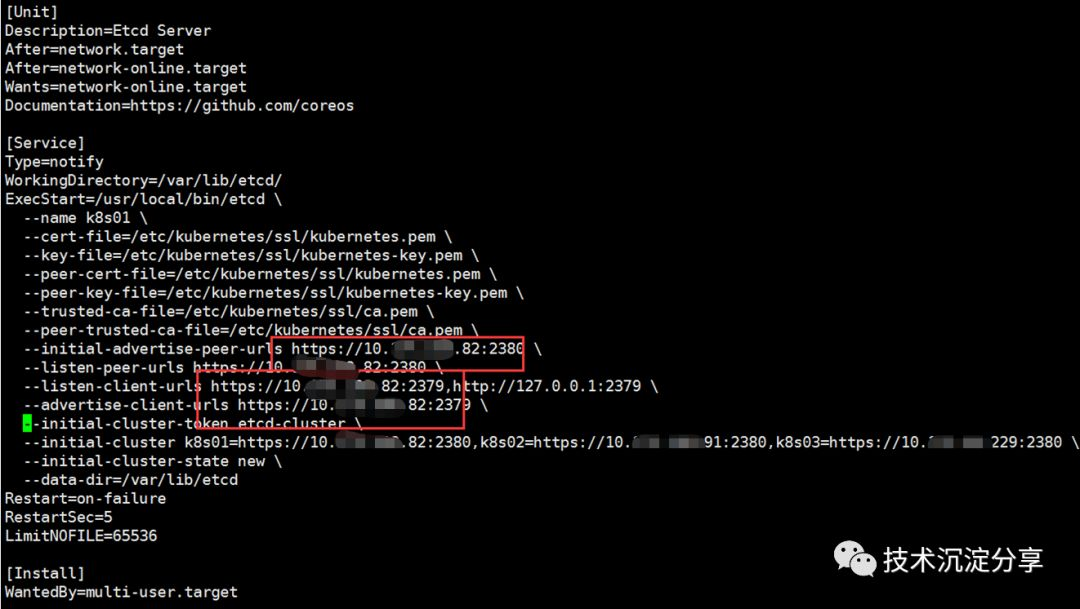

另外,关于etcd环境变量配置文件/etc/etcd/etcd.conf,本文在使用时,启动etcd一直报conflictingenv name ETCD_NAME,导致etcd启动失败(原因未找到,看了下etcd源码报错位置,环境变量已经有相同的名字,但实验从环境变量获取时,并没有ETCD_NAME变量名,不知道哪里出的问题)。本文不使用环境变量替换。因此对应的etcd.service内容如下:

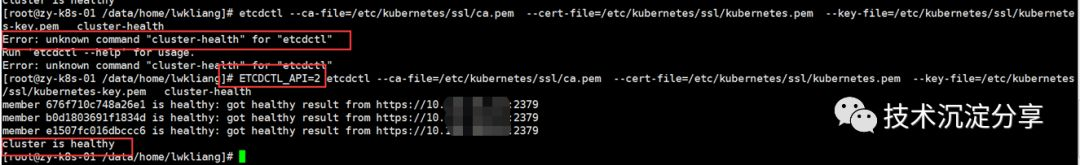

至此,ETCD集群搭建完成。

至此,ETCD集群搭建完成。

部署master节点

Master节点主要包含组件列表如下:

kube-apiserver

kube-scheduler

kube-controller-manager

创建 kube-apiserver的service配置文件

service配置文件/usr/lib/systemd/system/kube-apiserver.service内容

[Unit]

Description=KubernetesAPI Service

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=network.target

After=etcd.service

[Service]

EnvironmentFile=-/etc/kubernetes/config

EnvironmentFile=-/etc/kubernetes/apiserver

ExecStart=/usr/local/bin/kube-apiserver\

$KUBE_LOGTOSTDERR \

$KUBE_LOG_LEVEL \

$KUBE_ETCD_SERVERS \

$KUBE_API_ADDRESS \

$KUBE_API_PORT \

$KUBELET_PORT \

$KUBE_ALLOW_PRIV \

$KUBE_SERVICE_ADDRESSES \

$KUBE_ADMISSION_CONTROL \

$KUBE_API_ARGS

Restart=on-failure

Type=notify

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

/etc/kubernetes/config文件的内容为:

#kubernetessystem config

#

#The followingvalues are used to configure various aspects of all

#kubernetes services,including

#

#kube-apiserver.service

#kube-controller-manager.service

#kube-scheduler.service

#kubelet.service

#kube-proxy.service

#logging tostderr means we get it in the systemd journal

KUBE_LOGTOSTDERR=”–logtostderr=true”

#journal messagelevel, 0 is debug

KUBE_LOG_LEVEL=”–v=0”

#Should thiscluster be allowed to run privileged docker containers

KUBE_ALLOW_PRIV=”–allow-privileged=true”

#How thecontroller-manager, scheduler, and proxy find the apiserver

KUBE_MASTER=”–master=http://10.175.108.82:8080”

apiserver配置文件/etc/kubernetes/apiserver内容为:

##kubernetessystem config

##The followingvalues are used to configure the kube-apiserver

##The address onthe local server to listen to.

KUBE_API_ADDRESS=”–advertise-address=10.175.108.82–bind-address=10.175.108.82 –insecure-bind-address=10.175.108.82”

##The port on thelocal server to listen on.

#KUBE_API_PORT=”–port=8080”

#Port minionslisten on

#KUBELET_PORT=”–kubelet-port=10250”

#Comma separatedlist of nodes in the etcd cluster

KUBE_ETCD_SERVERS=”–etcd-servers=https://10.175.108.82:2379,https://10.175.108.91:2379,https://10.175.99.229:2379”

##Address rangeto use for services

KUBE_SERVICE_ADDRESSES=”–service-cluster-ip-range=10.254.0.0/16”

##defaultadmission control policies

KUBE_ADMISSION_CONTROL=”–admission-control=ServiceAccount,NamespaceLifecycle,NamespaceExists,LimitRanger,ResourceQuota”

##Add your own!

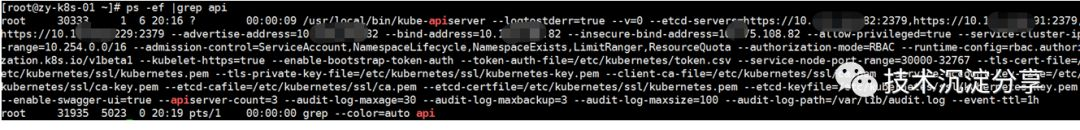

KUBE_API_ARGS=”–authorization-mode=RBAC–runtime-config=rbac.authorization.k8s.io/v1beta1 –kubelet-https=true –enable-bootstrap-token-auth –token-auth-file=/etc/kubernetes/token.csv–service-node-port-range=30000-32767–tls-cert-file=/etc/kubernetes/ssl/kubernetes.pem–tls-private-key-file=/etc/kubernetes/ssl/kubernetes-key.pem–client-ca-file=/etc/kubernetes/ssl/ca.pem–service-account-key-file=/etc/kubernetes/ssl/ca-key.pem–etcd-cafile=/etc/kubernetes/ssl/ca.pem–etcd-certfile=/etc/kubernetes/ssl/kubernetes.pem–etcd-keyfile=/etc/kubernetes/ssl/kubernetes-key.pem –enable-swagger-ui=true–apiserver-count=3 –audit-log-maxage=30 –audit-log-maxbackup=3–audit-log-maxsize=100 –audit-log-path=/var/lib/audit.log–event-ttl=1h”

启动kube-apiserver

systemctldaemon-reload

systemctl enablekube-apiserver

systemctl startkube-apiserver

systemctl statuskube-apiserver

配置和启动 kube-controller-manager

配置和启动 kube-controller-manager

创建 kube-controller-manager的serivce配置文件

文件路径/usr/lib/systemd/system/kube-controller-manager.service

[Unit]

Description=KubernetesController Manager

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

[Service]

EnvironmentFile=-/etc/kubernetes/config

EnvironmentFile=-/etc/kubernetes/controller-manager

ExecStart=/usr/local/bin/kube-controller-manager\

$KUBE_LOGTOSTDERR \

$KUBE_LOG_LEVEL \

$KUBE_MASTER \

$KUBE_CONTROLLER_MANAGER_ARGS

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

配置文件/etc/kubernetes/controller-manager

#The followingvalues are used to configure the kubernetes controller-manager

#defaults fromconfig and apiserver should be adequate

#Add your own!

KUBE_CONTROLLER_MANAGER_ARGS=”–address=127.0.0.1–service-cluster-ip-range=10.254.0.0/16 –cluster-name=kubernetes –cluster-signing-cert-file=/etc/kubernetes/ssl/ca.pem–cluster-signing-key-file=/etc/kubernetes/ssl/ca-key.pem –service-account-private-key-file=/etc/kubernetes/ssl/ca-key.pem–root-ca-file=/etc/kubernetes/ssl/ca.pem –leader-elect=true”

启动 kube-controller-manager

systemctldaemon-reload

systemctl enablekube-controller-manager

systemctl startkube-controller-manager

systemctl statuskube-controller-manager

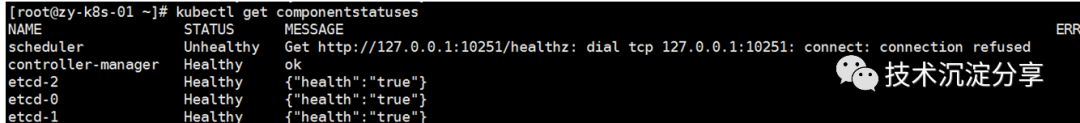

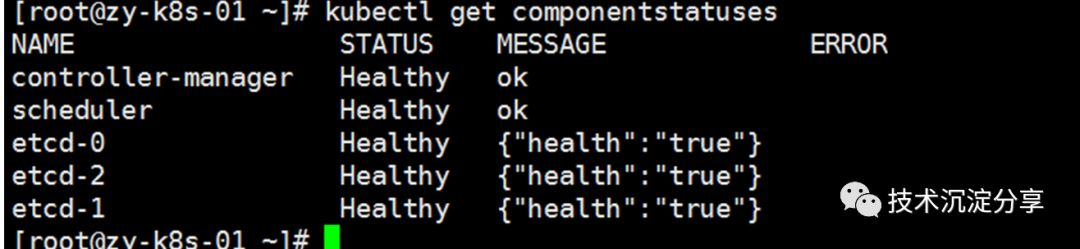

kubectl get componentstatuses

配置和启动 kube-scheduler

配置和启动 kube-scheduler

创建 kube-scheduler的serivce配置文件

文件路径/usr/lib/systemd/system/kube-scheduler.service。

[Unit]

Description=KubernetesScheduler Plugin

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

[Service]

EnvironmentFile=-/etc/kubernetes/config

EnvironmentFile=-/etc/kubernetes/scheduler

ExecStart=/usr/local/bin/kube-scheduler\

$KUBE_LOGTOSTDERR \

$KUBE_LOG_LEVEL \

$KUBE_MASTER \

$KUBE_SCHEDULER_ARGS

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

配置文件/etc/kubernetes/scheduler。

###

#kubernetesscheduler config

#default configshould be adequate

#Add your own!

KUBE_SCHEDULER_ARGS=”–leader-elect=true–address=127.0.0.1”

启动 kube-scheduler

systemctldaemon-reload

systemctl enablekube-scheduler

systemctl startkube-scheduler

systemctl statuskube-scheduler

验证 master 节点功能。如下图表示scheduler、controller-manager、etcd服务正常

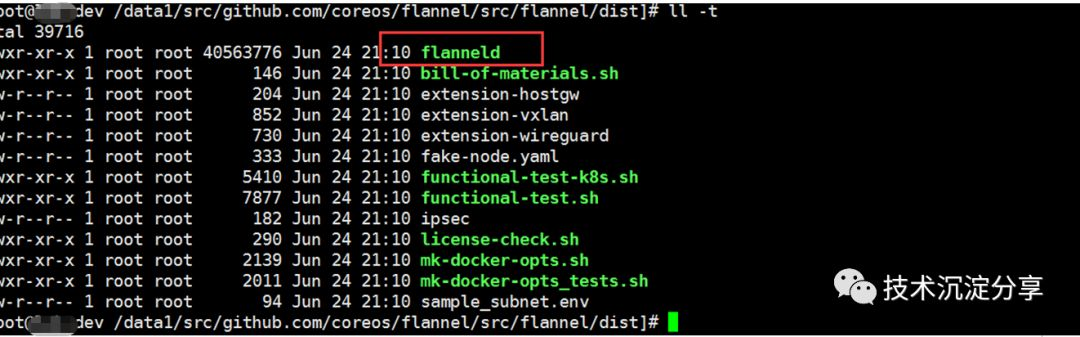

本文使用flannel作为K8S集群的网络插件

本文使用flannel作为K8S集群的网络插件

同样,本文从github上clone源码进行编译,编译指引,编译完成之后在dist目录下生成对应的二进制文件。

https://github.com/coreos/flannel/blob/master/Documentation/building.md

service配置文件/usr/lib/systemd/system/flanneld.service。

service配置文件/usr/lib/systemd/system/flanneld.service。

[Unit]

Description=Flanneldoverlay address etcd agent

After=network.target

After=network-online.target

Wants=network-online.target

After=etcd.service

Before=docker.service

[Service]

Type=notify

EnvironmentFile=/etc/sysconfig/flanneld

EnvironmentFile=-/etc/sysconfig/docker-network

ExecStart=/usr/local/bin/flanneld\

-etcd-endpoints=${FLANNEL_ETCD_ENDPOINTS} \

-etcd-prefix=${FLANNEL_ETCD_PREFIX} \

$FLANNEL_OPTIONS

ExecStartPost=/usr/libexec/flannel/mk-docker-opts.sh-k DOCKER_NETWORK_OPTIONS -d /run/flannel/docker

Restart=on-failure

[Install]

WantedBy=multi-user.target

RequiredBy=docker.service

/etc/sysconfig/flanneld配置文件:

#Flanneldconfiguration options

#etcd urllocation. Point this to the server whereetcd runs

FLANNEL_ETCD_ENDPOINTS=”https://10.175.108.82:2379,https://10.175.108.91:2379,https://10.175.99.229:2379” #etcd configkey. This is the configuration key thatflannel queries

#For addressrange assignment

FLANNEL_ETCD_PREFIX=”/kube-centos/network”

#Any additionaloptions that you want to pass

FLANNEL_OPTIONS=”-etcd-cafile=/etc/kubernetes/ssl/ca.pem-etcd-certfile=/etc/kubernetes/ssl/kubernetes.pem-etcd-keyfile=/etc/kubernetes/ssl/kubernetes-key.pem -iface=eth1

systemctldaemon-reload

systemctl enableflanneld

systemctl startflanneld

systemctl statusflanneld 在etcd中创建网络配置

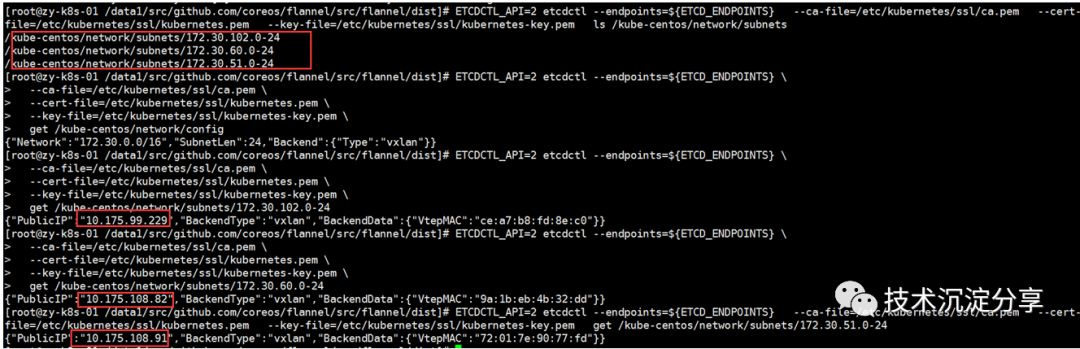

执行下面的命令为docker分配IP地址段【在master上执行即可】。

在master上执行一下指令:

ETCDCTL_API=2etcdctl –endpoints=${ETCD_ENDPOINTS} –ca-file=/etc/kubernetes/ssl/ca.pem –cert-file=/etc/kubernetes/ssl/kubernetes.pem –key-file=/etc/kubernetes/ssl/kubernetes-key.pem ls /kube-centos/network/subnets

ETCDCTL_API=2etcdctl–endpoints=https://10...82:2379,https://10...91:2379,https://10...229:2379 –ca-file=/etc/kubernetes/ssl/ca.pem –cert-file=/etc/kubernetes/ssl/kubernetes.pem –key-file=/etc/kubernetes/ssl/kubernetes-key.pem mkdir /kube-centos/network

ETCDCTL_API=2etcdctl–endpoints=https://10...82:2379,https://10...91:2379,https://10...229:2379 –ca-file=/etc/kubernetes/ssl/ca.pem –cert-file=/etc/kubernetes/ssl/kubernetes.pem –key-file=/etc/kubernetes/ssl/kubernetes-key.pem mk /kube-centos/network/config’{“Network”:”172.30.0.0/16”,”SubnetLen”:24,”Backend”:{“Type”:”vxlan”}}’ 现在查询etcd中的内容可以看到:

如果可以查看到以上内容证明flannel已经安装完成

部署NODE

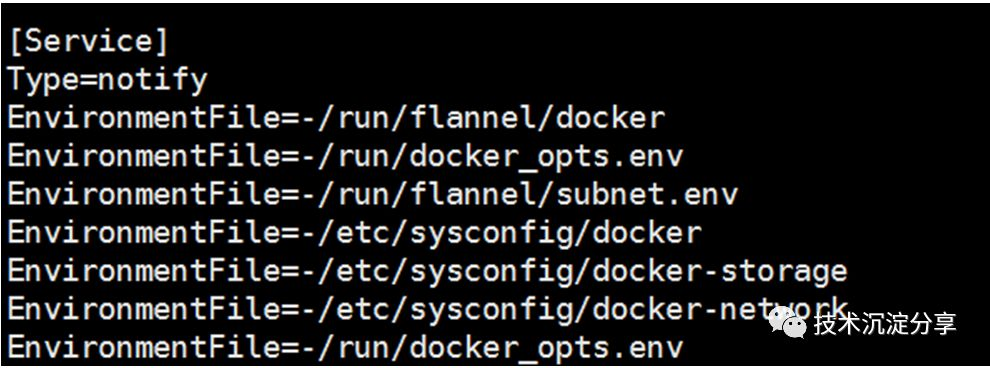

先将所有node上的docker.service文件添加一下内容。

EnvironmentFile=-/run/flannel/docker

EnvironmentFile=-/run/docker_opts.env

EnvironmentFile=-/run/flannel/subnet.env

EnvironmentFile=-/etc/sysconfig/docker

EnvironmentFile=-/etc/sysconfig/docker-storage

EnvironmentFile=-/etc/sysconfig/docker-network

EnvironmentFile=-/run/docker_opts.env

然后重启docker,systemctl daemon-reload;systemctl restartdocker

安装和配置kubelet

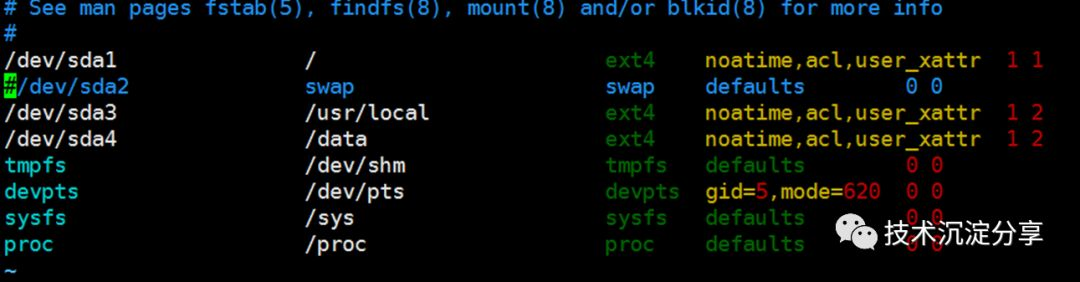

K8s1.8集群必须关掉swap,通过修改/etc/fstab将swap系统注释掉。

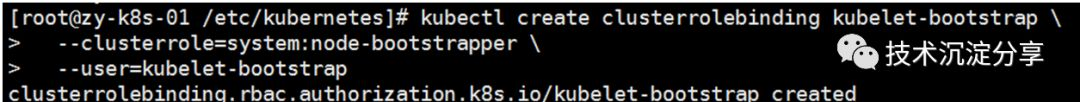

kubelet 启动时向kube-apiserver 发送 TLS bootstrapping 请求,需要先将 bootstrap token 文件中的kubelet-bootstrap 用户赋予 system:node-bootstrapper cluster 角色(role),然后 kubelet 才能有权限创建认证请求(certificatesigning requests),在master上执行以下命令:

kubectl createclusterrolebinding kubelet-bootstrap –clusterrole=system:node-bootstrapper –user=kubelet-bootstrap

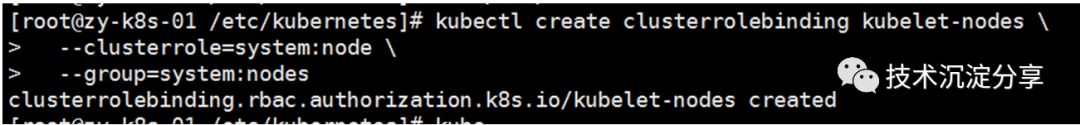

kubelet 通过认证后向kube-apiserver 发送 register node 请求,需要先将 kubelet-nodes 用户赋予system:node cluster角色(role) 和 system:nodes 组(group),然后 kubelet 才能有权限创建节点请求,在master上执行以下命令:

kubelet 通过认证后向kube-apiserver 发送 register node 请求,需要先将 kubelet-nodes 用户赋予system:node cluster角色(role) 和 system:nodes 组(group),然后 kubelet 才能有权限创建节点请求,在master上执行以下命令:

kubectl createclusterrolebinding kubelet-nodes \

–clusterrole=system:node \

–group=system:nodes

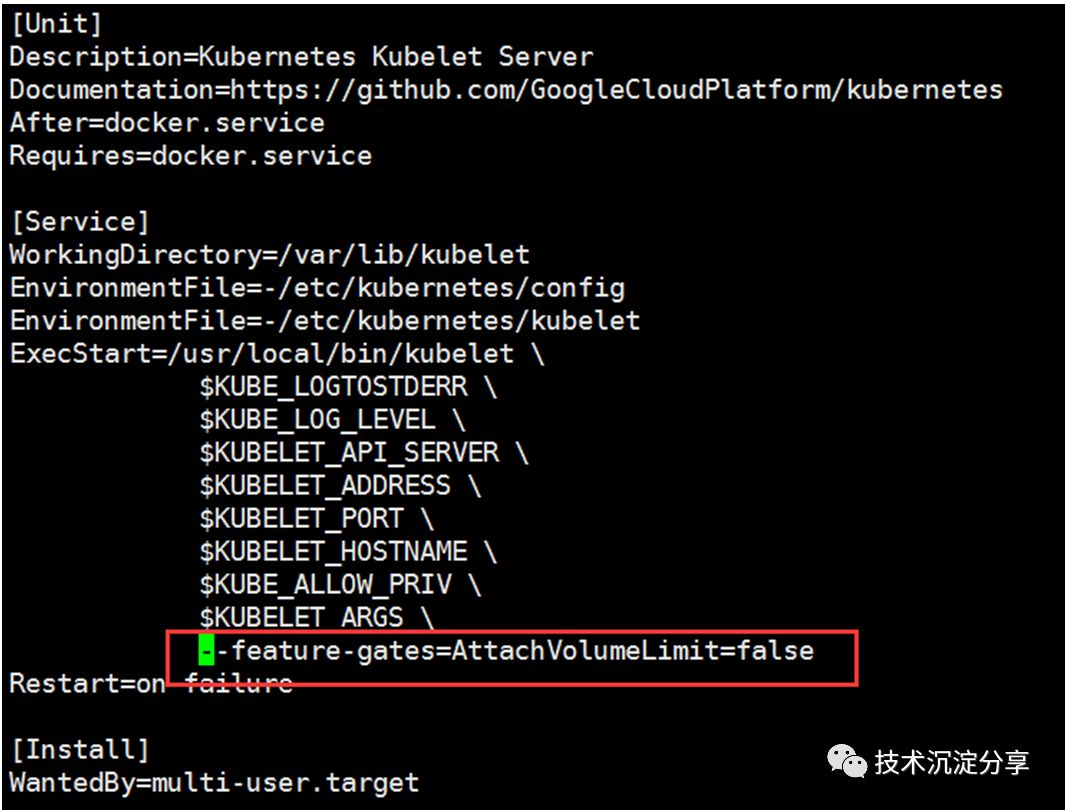

创建kubelet的service配置文件

文件位置/usr/lib/systemd/system/kubelet.service,内容如下:

[Unit]

Description=KubernetesKubelet Server

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=docker.service

Requires=docker.service

[Service]

WorkingDirectory=/var/lib/kubelet

EnvironmentFile=-/etc/kubernetes/config

EnvironmentFile=-/etc/kubernetes/kubelet

ExecStart=/usr/local/bin/kubelet\

$KUBE_LOGTOSTDERR \

$KUBE_LOG_LEVEL \

$KUBELET_API_SERVER \

$KUBELET_ADDRESS \

$KUBELET_PORT \

$KUBELET_HOSTNAME \

$KUBE_ALLOW_PRIV \

$KUBELET_POD_INFRA_CONTAINER \

$KUBELET_ARGS

Restart=on-failure

[Install]

WantedBy=multi-user.target

kubelet的配置文件/etc/kubernetes/kubelet。其中的IP地址更改为你的每台node节点的IP地址

注意:在启动kubelet之前,需要先手动创建/var/lib/kubelet目录mkdir -p/var/lib/kubelet。

下面是kubelet的配置文件/etc/kubernetes/kubelet:

##kuberneteskubelet (minion) config

#The address forthe info server to serve on (set to 0.0.0.0 or “” for all interfaces)

KUBELET_ADDRESS=”–address=172.20.0.113”

#The port forthe info server to serve on

#KUBELET_PORT=”–port=10250”

##You may leavethis blank to use the actual hostname

KUBELET_HOSTNAME=”–hostname-override=172.20.0.113”

#location of theapi-server

#COMMENT THIS ONKUBERNETES 1.8+

KUBELET_API_SERVER=”–api-servers=http://172.20.0.113:8080”

#podinfrastructure container

KUBELET_POD_INFRA_CONTAINER=”–pod-infra-container-image=jimmysong/pause-amd64:3.0”

#Add your own!

KUBELET_ARGS=”–cgroup-driver=systemd–cluster-dns=10.254.0.2 –experimental-bootstrap-kubeconfig=/etc/kubernetes/bootstrap.kubeconfig–kubeconfig=/etc/kubernetes/kubelet.kubeconfig –require-kubeconfig–cert-dir=/etc/kubernetes/ssl –cluster-domain=cluster.local –hairpin-modepromiscuous-bridge –serialize-image-pulls=false”

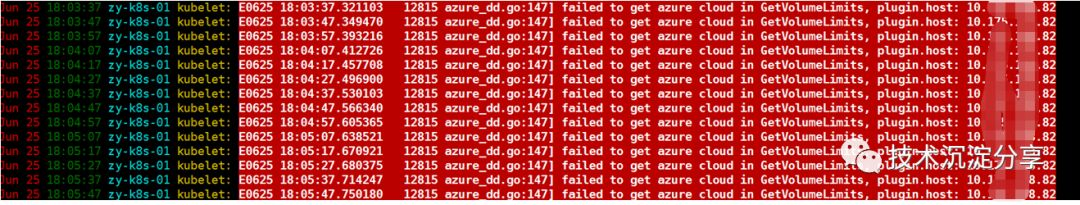

经过查询资料,发现是1.12因为在v1.12中的kubelet 的AttachVolumeLimit导致的,禁用

AttachVolumeLimit

启动kublet

启动kublet

systemctldaemon-reload

systemctl enablekubelet

systemctl startkubelet

systemctl statuskubelet

配置 kube-proxy

建 kube-proxy 的service配置文件

文件路径/usr/lib/systemd/system/kube-proxy.service。

[Unit]

Description=KubernetesKube-Proxy Server

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=network.target

[Service]

EnvironmentFile=-/etc/kubernetes/config

EnvironmentFile=-/etc/kubernetes/proxy

ExecStart=/usr/local/bin/kube-proxy\

$KUBE_LOGTOSTDERR \

$KUBE_LOG_LEVEL \

$KUBE_MASTER \

$KUBE_PROXY_ARGS

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

kube-proxy配置文件/etc/kubernetes/proxy

#kubernetes proxyconfig

#default configshould be adequate

#Add your own!

KUBE_PROXY_ARGS=”–bind-address=nodeIP–hostname-override=nodeIP –kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig–cluster-cidr=10.254.0.0/16”

systemctldaemon-reload

systemctl enablekube-proxy

systemctl startkube-proxy

systemctl statuskube-proxy

三台node都执行上述命令。中途出现异常导致kube

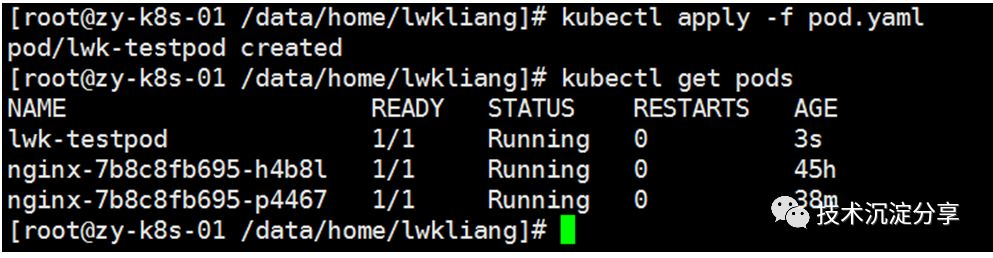

至此K8S集群搭建完成,创建一个pod试下

Yaml文件名pod.yaml,内容如下:

apiVersion: v1

kind: Pod

metadata:

labels:

test: lwk-testpod

name: lwk-testpod

spec:

containers:

- name: test-pod1

image: dockerimage.isd.com/lwk/test/test:v1.0.17

执行kubectl apply -f pod.yaml

在master上查看当前集群node节点信息以及POD信息

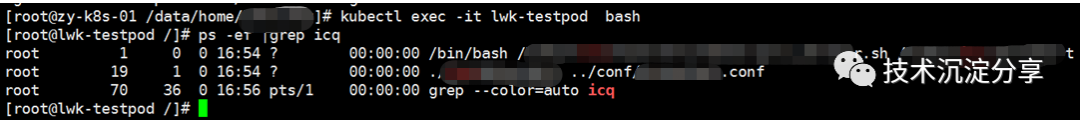

再进入pod看下:

再进入pod看下:

kubectl exec -itlwk-testpod bash

Perfect!

总结一下:

本文搭建过程也参考了网上一些其他指引;

本文主要通过二进制方式搭建K8S集群,了解二进制搭建的过程以及涉及到的K8S各个组件;通过二进制搭建,后续可方便调试其他功能;

另外对于小组内小伙伴,能方便的在上面开发operator、controller等功能,提升对K8S的深入了解,并提高go开发能力;

为广大K8S爱好者提供部署指引。